
GSA SER Verified Lists vs Scraping: Finding the Right Targets for Your Campaign

When running GSA Search Engine Ranker, one of the most critical decisions is where your links will come from. The backbone of any successful campaign is the target list, and two popular methods dominate the conversation: using pre-made GSA SER verified lists or scraping your own fresh targets. Both approaches aim to feed the engine with URLs that accept backlinks, but they differ radically in quality, effort, and results. Understanding how GSA SER verified lists compare with scraping will help you avoid wasting resources and build a more effective link profile.

What Are GSA SER Verified Lists?

A GSA SER verified list is a curated file of URLs that have already been tested with the software. These lists are typically generated by experienced users who run massive campaigns, identify targets that actually allowed a submission or registration, and then export the confirmed links. Because each URL on a verified list has already passed through the engine’s posting process, you skip the trial-and-error phase of testing unknown sites and load targets that have already accepted posts for specific platform types such as blog comments, trackbacks, forums, or article directories.

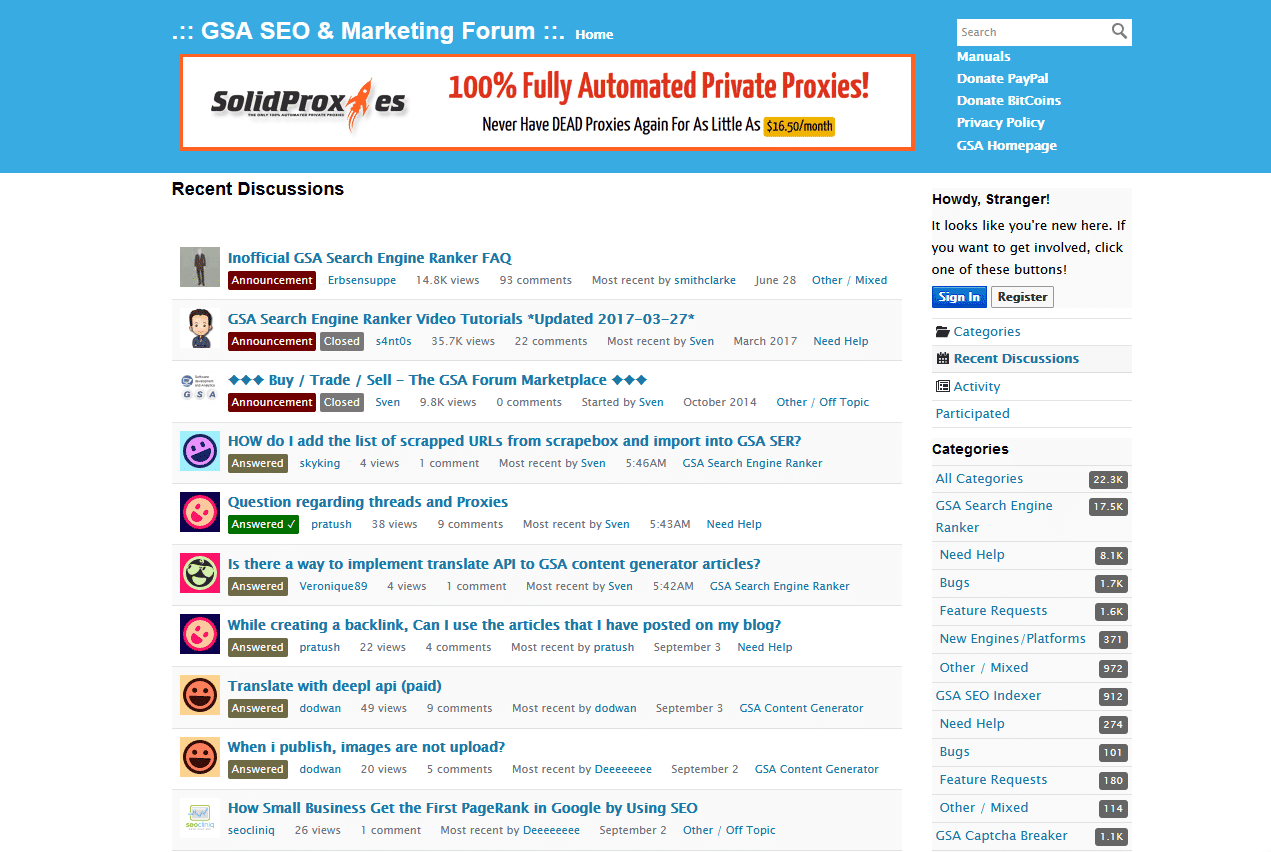

Verified lists are often bought from private vendors, shared within SEO communities, or built from your own previous runs. The main selling point is efficiency: instead of letting GSA SER waste time on sites that reject submissions, block automated tools, or have broken forms, you feed it a clean, pre-screened pool of targets. This can dramatically increase your verified links per minute and reduce the load on your proxies and captcha-solving service.

What Is Scraping for GSA SER?

Scraping refers to the process of generating target URLs in real time by harvesting search engine results. GSA SER uses your chosen keywords, footprint strings, and search engines to discover new, potentially unposted sites. The scraper sends queries such as “powered by wordpress” + “leave a comment” to Google, Bing, or custom engines, parses the results, and attempts to post on each URL it finds. Scraping lets you work with fresh sites that are less likely to have been hammered by other GSA SER users, which can be a significant advantage for tiered link building and for avoiding instant bans.

However, scraping comes with significant overhead. It consumes proxy bandwidth, requires a constant pool of quality proxies, and demands intelligent keyword and footprint selection. Because scraped targets are completely untested, a large percentage will fail or be filtered out, whether due to aggressive anti-spam measures, nofollow attributes, or simply because the scraped page isn’t really a posting endpoint. The engine must spend time attempting each URL before discarding it, which can lower your overall verified link ratio if not optimized properly.

GSA SER Verified Lists vs Scraping: Key Differences

Quality and Success Rate

With a well-maintained verified list, the success rate per target is exceptionally high. Since every entry was previously verified, you can expect a large share of attempts to result in a successful submission. Scraping, on the other hand, yields a mixed bag. Even carefully built footprints can return a high percentage of false positives: pages that appear to accept comments but have comments closed, require a login, or use JavaScript challenges. On success rate, verified lists win decisively when you need consistency and speed. For fresh, unspammed backlinks with higher potential for indexability, scraping often wins out.
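To make the success-rate gap concrete, the sketch below compares how many attempts each kind of pool needs to produce the same number of verified links. It is a minimal Python illustration under stated assumptions: `TargetPool` and `attempts_needed` are hypothetical names, not part of GSA SER, and the attempt and verified counts are illustrative numbers rather than measurements from any real list or scrape.

```python
from dataclasses import dataclass


@dataclass
class TargetPool:
    """Hypothetical summary of one batch of targets fed to a project."""

    name: str
    attempts: int  # URLs the engine tried to post to
    verified: int  # attempts that ended in a verified link

    @property
    def verified_ratio(self) -> float:
        # Share of attempts that produced a verified link.
        return self.verified / self.attempts if self.attempts else 0.0

    def attempts_needed(self, target_links: int) -> int:
        # Attempts required to reach target_links at this pool's ratio,
        # rounded up with integer arithmetic to avoid float error.
        if self.verified == 0:
            raise ValueError(f"{self.name}: no verified links, ratio is zero")
        return (target_links * self.attempts + self.verified - 1) // self.verified


# Illustrative figures only, not measurements.
verified_list = TargetPool("verified list", attempts=1000, verified=600)
scraped = TargetPool("scraped targets", attempts=1000, verified=50)

for pool in (verified_list, scraped):
    print(f"{pool.name}: {pool.verified_ratio:.0%} verified, "
          f"{pool.attempts_needed(300)} attempts for 300 links")
```

With these assumed figures, reaching 300 verified links takes 500 attempts from the list pool but 6,000 from the scraped pool, which is why a low verified ratio translates directly into more proxy traffic and captcha spend.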

Time and Effort

Using a verified list is essentially plug-and-play. You load the file, set your project to use it, and the engine focuses on posting rather than searching. Scraping demands upfront work: researching effective footprints, rotating user agents, fine-tuning search engine selection, and monitoring for blocks. It’s an ongoing task because search engines constantly change their algorithms and actively block or throttle automated queries. If you value minimal setup and immediate results, the verified list route is far more efficient. If you are willing to invest time to harvest unique resources, scraping gives you long-term control.

Customization and Scalability

Scraping allows granular targeting. Need only French-language guestbooks or .edu forum profiles? You can tailor your footprints to match exactly that. Verified lists are often generic or platform-grouped; you can sort by engine type but can’t easily limit them to a specific niche or region unless the list compiler did that work. When building a contextually relevant tier 1, scraping offers unmatched precision. For massive tier 2 or tier 3 blasts where volume matters more, a diverse verified list scales faster without proxy expenses ballooning.

Cost Considerations

Good verified lists aren’t free; private, regularly updated lists usually come with a price tag. However, they can save considerable money on proxies and captcha solving because far fewer attempts are wasted on dead targets. Scraping costs nothing up front if you use public footprints, but the hidden costs add up: you’ll burn through residential or mobile proxies faster, encounter more captchas, and may need multiple search engine APIs. A poorly managed scraping setup can cost more in proxies than simply buying a premium verified list.
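The trade-off can be reduced to a single figure: total spend divided by verified links produced. The following is a minimal sketch of that arithmetic in Python, assuming a simple cost model of list price plus proxy spend plus a flat per-solve captcha rate; the function name and every input value are hypothetical and chosen only for illustration.

```python
def cost_per_verified_link(
    list_cost: float,
    proxy_cost: float,
    captcha_rate: float,
    captcha_solves: int,
    verified_links: int,
) -> float:
    """Return total campaign spend divided by verified links (hypothetical cost model)."""
    if verified_links <= 0:
        raise ValueError("verified_links must be positive")
    # Captcha spend is modeled as a flat price per solve (an assumption).
    total_spend = list_cost + proxy_cost + captcha_rate * captcha_solves
    return total_spend / verified_links


# Illustrative inputs only: a paid list vs. a poorly tuned scrape.
list_run = cost_per_verified_link(
    list_cost=30.0, proxy_cost=10.0, captcha_rate=0.002,
    captcha_solves=1000, verified_links=600,
)
scrape_run = cost_per_verified_link(
    list_cost=0.0, proxy_cost=60.0, captcha_rate=0.002,
    captcha_solves=8000, verified_links=50,
)
print(f"verified list: ${list_run:.3f} per link")
print(f"scraping:      ${scrape_run:.3f} per link")
```

Under these assumed inputs the scrape costs roughly twenty times more per verified link, which illustrates how a poorly managed scraping setup can outspend a premium list; a well-tuned scrape with a higher verified ratio would narrow or reverse that gap.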

Which Approach Should You Choose?

There’s no universal winner in the GSA SER verified lists vs scraping debate—it depends entirely on your goals and resources. If you need rapid link velocity, lower running costs, and a reliable base of target platforms, integrating high-quality verified lists into your workflow is a smart move. They shine for bottom-tier pyramids and quick indexing passes. If you prioritize link uniqueness, want to avoid leaving footprints, and need site-specific contextual relevance, mastering scraping is essential.

A hybrid strategy often yields the best outcome. Start with a robust verified list to get immediate results, then supplement it with ongoing scraping of fresh URLs. That way, you combine the high success rates of pre-tested targets with the freshness and diversity of new discoveries. Regularly test your scraped targets in debug mode, turn the best performers into your own private verified lists, and keep refining your footprints. By balancing both methods, you transform the verified lists vs scraping dilemma into a powerful, adaptive link building engine.